Imagine you were responsible for monitoring the safety of a subway system. Where would you begin? Most likely, you'd start by thinking about the key risks, like collision or derailment, consider which causal factors, such as scheduling software and track conditions, might contribute to bad outcomes, and institute processes and metrics to detect whether those situations arise. What you wouldn't do is blindly apply irrelevant industry standards like testing for problems with the landing gear (great for planes, irrelevant for trains) or obsessively worry about low-probability events like accidental teleportation before you'd locked down the fundamentals.

When thinking about real-world scenarios, we're naturally inclined to think about key risks and mechanistic causes. However, in the more abstract world of data, our tests often gravitate towards one of two extremes: applying rote out-of-the-box tests (nulls, PK-FK relationships, etc.) from the world of traditional database management, or playing with exciting new toys that promise to catch our wildest errors with anomaly detection and artificial intelligence.

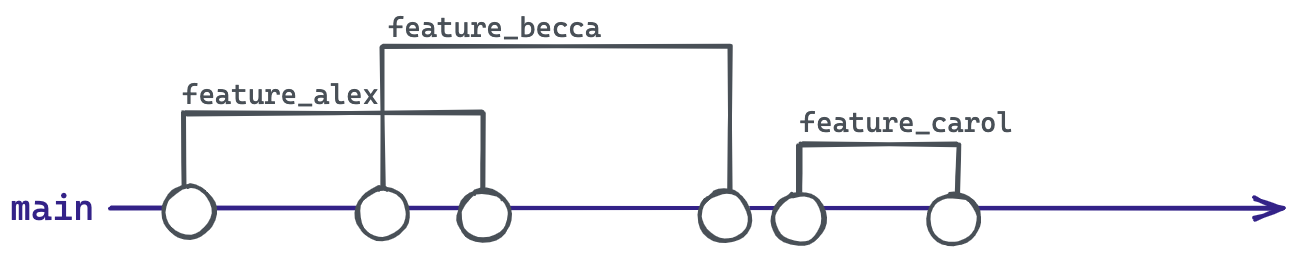

Between these two extremes lies a gap where human intelligence goes. Analytics engineers can create more effective tests by embedding their understanding of how the data was created, and especially how this data can go awry (a topic I've written about previously). While such expressive tests will be unique to our domain, modest tweaks to our mindset can help us implement them with our standard tools. This post demonstrates how the simple act of conducting tests by group can expand the universe of possible tests, boost the sensitivity of the existing suite, and help keep our data "on track". Support for grouped tests is now available in dbt-utils.
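To make the idea of testing by group concrete, here is a minimal sketch in plain Python. It is not the dbt-utils implementation. The station names, dates, and the `is_recent` and `stale_groups` helpers are all hypothetical, made up for illustration. It runs the same freshness check twice, once on the whole table and once per station.

```python
from collections import defaultdict
from datetime import date, timedelta

# Hypothetical ridership records as (station, day) pairs; all values are made up.
TODAY = date(2022, 1, 10)
records = [("north", TODAY - timedelta(days=d)) for d in range(0, 5)]
# Assumption for illustration: the "south" station stopped reporting four days ago.
records += [("south", TODAY - timedelta(days=d)) for d in range(4, 9)]


def is_recent(days, as_of, max_lag_days=1):
    """Return True if the newest date in `days` is within `max_lag_days` of `as_of`."""
    return (as_of - max(days)) <= timedelta(days=max_lag_days)


def stale_groups(rows, as_of, max_lag_days=1):
    """Run the same recency check within each group and return the groups that fail."""
    by_group = defaultdict(list)
    for group, day in rows:
        by_group[group].append(day)
    return sorted(
        group
        for group, days in by_group.items()
        if not is_recent(days, as_of, max_lag_days)
    )


# The table-wide check looks only at the single newest row across all stations.
global_ok = is_recent([day for _, day in records], TODAY)

# The grouped check asks the same question once per station.
failing = stale_groups(records, TODAY)

print(global_ok, failing)
```

In this toy data the table-wide check passes because one station is still reporting, while the grouped version singles out the station that has gone quiet. That difference is the extra sensitivity grouping is meant to buy.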